Best practices: Machine learning

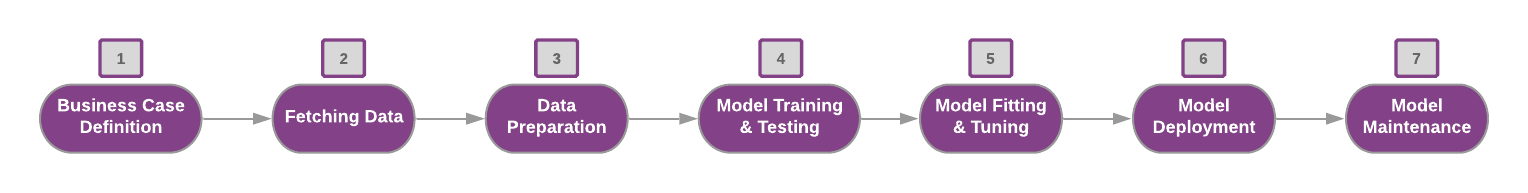

This is the machine learning product life cycle:

Components overview

These are the components:

- Datasets: A dataset contains the data that is used as input in the quest.

- Quests: A quest is the flow of activities that build the machine learning model.

- Endpoints: An endpoint is the deployed model that provides predictions.

Business case definition

The first step towards a successful project is to define the business problem.

Understand the domain, and set clear goals and evaluation criteria for your project. Determine all the benefits that the project can provide.

Ask these questions:

- What is the current process related to the business case?

- Would machine learning specifically help with this issue? If so, how?

- Will the project contribute to these objectives?

- Save Time: time that could be re-invested into other initiatives.

- Save Money: improving accuracy and efficiency across industries. For example, a predictive maintenance use case can reduce the costs associated with customer attrition and manual maintenance effort. Additionally, a recommendation engine use case can increase brand loyalty and customer engagement.

- Improve Customer Experience: demonstrating customer commitment can result in customers being more likely to choose you over competitors. Additionally, customers are more loyal when their experience is tailored to their individual needs. Machine learning can make this kind of customer experience available without radically increased human costs.

Fetching data

A dataset contains the data that is used as input. A dataset can be uploaded from your local system or imported from Data Lake.

When you upload from a file, the datatypes in the metadata section are estimated automatically based on a sample of the data. Check the estimations and adjust them if there are discrepancies.

In some cases, the metadata guesser does not recognize the Date/Timestamp datatypes from the file correctly, because there are many format variants. For these cases, manually specify the Date/Timestamp format of the respective dataset.
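To illustrate why an explicit format matters, here is a minimal sketch in plain Python (not the application's own mechanism). The sample values and the two format strings are assumptions chosen for illustration; the same text parses to different dates depending on the format you specify.

```python
from datetime import datetime

# Hypothetical sample values, as they might appear in an uploaded file.
raw_values = ["03/04/2021 14:30", "12/11/2021 08:05"]

# "03/04/2021" is ambiguous (3 April or 4 March), which is why an
# automatic guess can be wrong and an explicit format is needed.
DAY_FIRST = "%d/%m/%Y %H:%M"
MONTH_FIRST = "%m/%d/%Y %H:%M"

def parse_all(values, fmt):
    """Parse every value with one explicit format; raise ValueError on mismatch."""
    return [datetime.strptime(v, fmt) for v in values]

day_first = parse_all(raw_values, DAY_FIRST)
month_first = parse_all(raw_values, MONTH_FIRST)

print(day_first[0].date())    # 2021-04-03
print(month_first[0].date())  # 2021-03-04
```

Both formats parse without error here, so only knowledge of the data source tells you which one is correct.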

Data preparation

You must have a good understanding of the dataset. Investigate and understand its structure.

Data must be transformed so that algorithms can understand and consume it. The data generated in business applications is generally incomplete (lacks attribute values, lacks certain attributes of interest, or contains only aggregate data), noisy (contains errors or outliers), and inconsistent (contains discrepancies in codes or names).

The data must be formatted, cleaned, and organized before feeding it into the model training.

Answer this question: What are the inconsistencies and defects in the data that need to be resolved?

These are common pre-processing practices:

- Cleaning: assign or remove missing values, smooth noisy data, identify or remove outliers, resolve inconsistencies

- Transformation: normalization and aggregation

- Reduction: reduce the volume but produce the same or similar analytical results

- Discretization: replace numerical attributes with nominal ones

After each transformation you can save the resulting dataset to use it in another quest.
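The pre-processing practices above can be sketched in a few lines of plain Python. This is only an illustration outside the application: the `ages` column, the plausible range of 0 to 120, and the group boundaries are all assumptions.

```python
from statistics import median

# Hypothetical numeric column with a missing value (None) and an outlier (950).
ages = [23, 35, None, 41, 29, 950, 52]

# Cleaning: fill missing values with the median of the observed values.
observed = [v for v in ages if v is not None]
fill = median(observed)
filled = [fill if v is None else v for v in ages]

# Cleaning: drop values outside an assumed plausible range for this column.
cleaned = [v for v in filled if 0 <= v <= 120]

# Transformation: min-max normalization to the range [0, 1].
lo, hi = min(cleaned), max(cleaned)
normalized = [(v - lo) / (hi - lo) for v in cleaned]

# Discretization: replace each number with a nominal label.
def age_group(v):
    if v < 30:
        return "young"
    if v < 50:
        return "middle"
    return "senior"

groups = [age_group(v) for v in cleaned]

print(cleaned)
print(groups)
```

The median is used for filling because it is less sensitive to the outlier than the mean would be.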

Model training and testing

Consider these questions for model training and testing. Use the Algorithm Quick Reference inside the application to help with these decisions.

- Are you going to let the model learn by itself (unsupervised learning), or are you going to guide the training with labeled examples (supervised learning)?

- Is the problem type a classification, regression, clustering problem, or something else?

- Which modeling approach is suitable for your use case?

- Iteration: How many times is your model going to run through the training dataset?

If the dataset is very large, the training process may take a long time.
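The effect of the iteration count can be seen in a small sketch. It assumes a toy linear model fitted by batch gradient descent, which is not necessarily what any algorithm in the application does; the data, the learning rate `lr`, and the `train` function are hypothetical. Each extra iteration is one more full pass over the data, so the error drops while the training time grows with both the iteration count and the dataset size.

```python
def train(xs, ys, epochs, lr=0.01):
    """Fit y = w * x + b by batch gradient descent; return (w, b, mse)."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    mse = sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / n
    return w, b, mse

# Hypothetical noise-free data generated from y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]

mse_by_epochs = {}
for epochs in (10, 100, 1000):
    w, b, mse = train(xs, ys, epochs)
    mse_by_epochs[epochs] = mse
    print(epochs, "iterations -> mean squared error", round(mse, 4))
```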

Model fitting and tuning

Generating value from the model is a process that requires time and adjustments.

Consider how you measure the model performance. After you understand which model structures and approaches work for your problem, focus on maximizing performance gains from the model.

These are strategies to improve the performance of your machine learning model:

- Review the data source. Is this the best data source for your model? Are there any drawbacks in the selected dataset? If not, consider increasing the sample size.

- Try changing the type of classifier/regressor objective in the algorithm hyperparameters for different results.

- Fine-tune the algorithm's hyperparameters.

- Try a different machine learning algorithm in parallel and compare the results.

- Try different proportions of training and testing data subsets.

- Refine the training process by increasing the number of iterations through the dataset. Keep in mind that this slows down the training process.

Iterate on each strategy until the model reaches a satisfactory level of performance.
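Two of the strategies above, tuning a hyperparameter and trying different training and testing proportions, can be combined in a simple grid search. The sketch below is an assumption-heavy illustration in plain Python: the synthetic data, the 1-D k-nearest-neighbors classifier, and the candidate values are all hypothetical and stand in for whatever algorithm and dataset you use.

```python
import random
from itertools import product

def knn_predict(train, x, k):
    """Majority label among the k training points closest to x (1-D feature)."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    votes = sum(label for _, label in nearest)
    return 1 if votes * 2 > k else 0

def accuracy(train, test, k):
    """Fraction of test points whose predicted label matches the true label."""
    hits = sum(knn_predict(train, x, k) == y for x, y in test)
    return hits / len(test)

# Hypothetical data: label is 1 when the feature exceeds 5, with about 10% of labels flipped.
rng = random.Random(0)
data = []
for _ in range(200):
    x = rng.uniform(0, 10)
    y = int(x > 5)
    if rng.random() < 0.1:
        y = 1 - y
    data.append((x, y))

# Try every combination of hyperparameter k (odd, to avoid ties) and training proportion.
results = {}
for k, train_fraction in product((1, 5, 15), (0.6, 0.7, 0.8)):
    cut = round(len(data) * train_fraction)
    train, test = data[:cut], data[cut:]
    results[(k, train_fraction)] = accuracy(train, test, k)

best = max(results, key=results.get)
print("best (k, train_fraction):", best, "accuracy:", round(results[best], 3))
```

Because each proportion is scored on a different test subset, treat small differences between proportions with caution and compare hyperparameter values within the same split first.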

Model deployment

After the model is trained, scored, and evaluated inside the training quest, choose the activities that correspond to the best model and move them into the production quest for the final test before deployment.

Model maintenance

Get feedback from real-world interactions and redefine the goals for the next iteration of deployment.

Eliminate unnecessary features. Regularly evaluate the effect of removing individual features from a given model, because unimportant features add noise to your feature space. A model's feature space should only contain relevant and important features for the given task.
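One way to evaluate the effect of removing individual features is an ablation loop: score the model with all features, then again with each feature left out. The following is a minimal sketch under stated assumptions. The synthetic data, the feature names, and the nearest-centroid classifier are hypothetical stand-ins for your own model and dataset.

```python
import random

def centroid_accuracy(rows, labels, keep):
    """Train a nearest-centroid classifier on the first half of the data, using
    only the feature indexes in `keep`, and return accuracy on the second half."""
    half = len(rows) // 2

    def project(row):
        return [row[i] for i in keep]

    centroids = {}
    for cls in (0, 1):
        members = [project(r) for r, y in zip(rows[:half], labels[:half]) if y == cls]
        centroids[cls] = [sum(col) / len(members) for col in zip(*members)]

    def predict(row):
        p = project(row)
        return min(centroids, key=lambda c: sum((a - b) ** 2 for a, b in zip(p, centroids[c])))

    hits = sum(predict(r) == y for r, y in zip(rows[half:], labels[half:]))
    return hits / (len(rows) - half)

# Hypothetical data: two features carry signal about the label, one is pure noise.
rng = random.Random(1)
names = ["signal_a", "signal_b", "noise"]
rows, labels = [], []
for _ in range(200):
    y = rng.randint(0, 1)
    rows.append([y + rng.gauss(0, 0.5), y + rng.gauss(0, 0.5), rng.gauss(0, 5)])
    labels.append(y)

all_idx = list(range(len(names)))
baseline = centroid_accuracy(rows, labels, all_idx)
print("all features:", baseline)

# Ablation: leave one feature out at a time and compare with the baseline.
ablation = {}
for i, name in enumerate(names):
    keep = [j for j in all_idx if j != i]
    ablation[name] = centroid_accuracy(rows, labels, keep)
    print("without", name, "->", ablation[name])
```

A feature whose removal leaves the score unchanged or improves it is a candidate for elimination; a feature whose removal lowers the score is likely relevant.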

Over time, as the input data distribution changes, the model's performance may weaken. Schedule periodic model retraining so that the model always learns from the most recent data, which prevents model staleness. Set the schedule based on how your data varies: choose a period after which you would expect different insights from the model.

Set up automatic scheduled model retraining in ION Workflow.
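To help choose a retraining period, you can check how far recent input data has moved from the data the model was trained on. The sketch below is a crude heuristic in plain Python, not a feature of ION Workflow: the `needs_retraining` function, the threshold of two standard deviations, and the sample values are all assumptions.

```python
from statistics import mean, stdev

def needs_retraining(training_values, recent_values, threshold=2.0):
    """Return True when the recent mean is more than `threshold` training
    standard deviations away from the training mean."""
    shift = abs(mean(recent_values) - mean(training_values))
    return shift > threshold * stdev(training_values)

# Hypothetical values of one input feature.
training = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2]
stable = [10.0, 10.1, 9.9]
drifted = [12.5, 12.8, 13.1]

print(needs_retraining(training, stable))   # False
print(needs_retraining(training, drifted))  # True
```

If a check like this fires much more often than the scheduled retraining runs, the schedule is probably too sparse for how quickly the data changes.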